Discrete Probability Distribution Overview

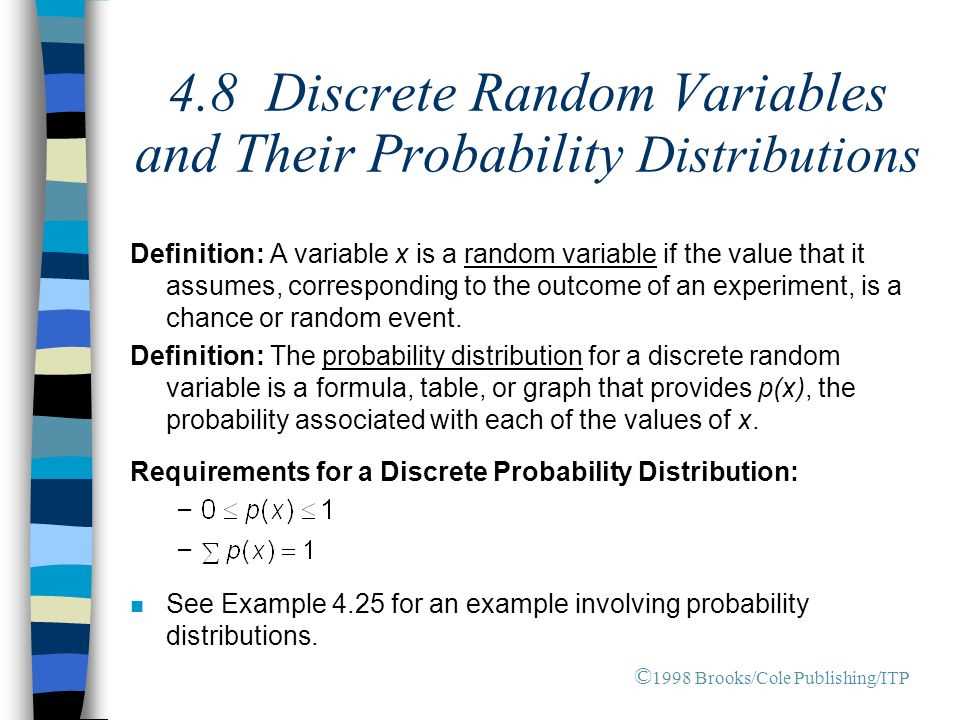

A discrete probability distribution describes the likelihood of each possible outcome of a discrete random variable. Unlike continuous probability distributions, which deal with random variables that can take any value in a range, discrete probability distributions deal with variables that can only take on a finite or countably infinite number of values.

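Discrete probability distributions are represented using probability mass functions (PMFs). A PMF assigns a probability to each possible outcome, and these probabilities sum to 1. Probability density functions (PDFs), by contrast, belong to continuous distributions: a PDF assigns probabilities to intervals of values (as the area under the curve), not to individual points.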

Basic Concepts

There are several key concepts to understand when working with discrete probability distributions:

- Random Variable: A random variable is a variable that can take on different values with certain probabilities. In the context of discrete probability distributions, the random variable can only take on a finite or countably infinite number of values.

- Probability Mass Function (PMF): The probability mass function assigns probabilities to each possible outcome of a random variable. It is often denoted as P(X = x), where X is the random variable and x is a specific value.

- Cumulative Distribution Function (CDF): The cumulative distribution function gives the probability that a random variable takes on a value less than or equal to a given value. It is denoted as P(X ≤ x).

- Variance: The variance measures the spread or dispersion of a discrete probability distribution. It is calculated by summing the squared differences between each possible outcome and the expected value, weighted by their corresponding probabilities.
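The PMF, CDF, and variance described above can be sketched in a few lines of Python. This is a minimal illustration, assuming a fair six-sided die as the random variable; the die and the names `pmf`, `cdf`, `expected_value`, and `variance` are chosen for this example and are not taken from any particular library.

```python
from fractions import Fraction

# Assumed example: a fair six-sided die, so P(X = x) = 1/6 for x = 1..6.
pmf = {x: Fraction(1, 6) for x in range(1, 7)}

def cdf(pmf, x):
    """Return P(X <= x) by summing the PMF over all outcomes up to x."""
    return sum((p for value, p in pmf.items() if value <= x), Fraction(0))

def expected_value(pmf):
    """Return the probability-weighted average of the outcomes."""
    return sum(value * p for value, p in pmf.items())

def variance(pmf):
    """Return the probability-weighted squared deviation from the mean."""
    mean = expected_value(pmf)
    return sum((value - mean) ** 2 * p for value, p in pmf.items())

print(cdf(pmf, 3))    # 1/2
print(variance(pmf))  # 35/12
```

Exact fractions are used here only to keep the results free of floating-point rounding; ordinary floats would work the same way.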

Examples of Discrete Probability Distributions

There are many examples of discrete probability distributions, including:

- Poisson Distribution: The Poisson distribution models the number of events that occur in a fixed interval of time or space, given the average rate of occurrence.

- Hypergeometric Distribution: The hypergeometric distribution models the number of successes in a fixed number of draws without replacement from a finite population that contains a known number of successes. Because drawn items are not returned, the probability of success changes from one draw to the next.

These are just a few examples, and there are many other discrete probability distributions that are used in various fields of study.
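As a rough illustration of the hypergeometric case, the sketch below computes its PMF directly from binomial coefficients. The card-drawing scenario (5 cards from a 52-card deck containing 13 hearts) and the function name `hypergeom_pmf` are assumptions made for this example.

```python
from math import comb

def hypergeom_pmf(k, population, successes, draws):
    """P(exactly k successes) when drawing `draws` items without replacement
    from `population` items, of which `successes` count as successes."""
    failures = population - successes
    if k < 0 or k > draws or k > successes or draws - k > failures:
        return 0.0
    return comb(successes, k) * comb(failures, draws - k) / comb(population, draws)

# Assumed example: draw 5 cards from a 52-card deck that contains 13 hearts.
probabilities = [hypergeom_pmf(k, 52, 13, 5) for k in range(6)]
for k, p in enumerate(probabilities):
    print(f"P({k} hearts) = {p:.4f}")
```

The probabilities over all feasible values of `k` add up to 1, which is a quick sanity check for any PMF.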

| Random Variable (X) | Probability (P(X = x)) |
|---|---|
| 0 | 0.2 |
| 1 | 0.3 |
| 2 | 0.4 |
| 3 | 0.1 |
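A table like the one above can be checked and used programmatically. The following sketch, which assumes the table is stored as a Python dictionary, verifies that it is a valid PMF and then draws random samples from it to compare observed frequencies with the stated probabilities. The helper names `is_valid_pmf` and `sample` are hypothetical.

```python
import math
import random
from collections import Counter

# The PMF from the table: outcome -> probability.
pmf = {0: 0.2, 1: 0.3, 2: 0.4, 3: 0.1}

def is_valid_pmf(pmf):
    """A PMF needs non-negative probabilities that sum to 1."""
    return all(p >= 0 for p in pmf.values()) and math.isclose(sum(pmf.values()), 1.0)

def sample(pmf, n, seed=0):
    """Draw n outcomes, each chosen with its PMF probability."""
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    return rng.choices(list(pmf), weights=list(pmf.values()), k=n)

draws = sample(pmf, 10_000)
freqs = {x: count / len(draws) for x, count in sorted(Counter(draws).items())}

print(is_valid_pmf(pmf))
print(freqs)  # observed frequencies should be close to the table values
```

With more samples, the observed frequencies tend to move closer to the probabilities in the table.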

Definition and Basic Concepts

A discrete probability distribution is a statistical distribution that represents the probabilities of different outcomes in a discrete set of possible values. It is used to model random variables that can only take on specific values, rather than a continuous range of values.

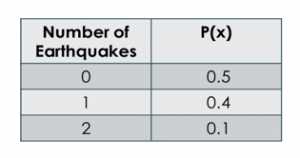

One of the key concepts in a discrete probability distribution is the probability mass function (PMF). The PMF gives the probability of each possible outcome in the distribution. It is often represented as a table or a graph, with the possible outcomes listed along with their corresponding probabilities.

Another important concept is the expected value or mean of the distribution. The expected value is the average of the possible values of the random variable, with each value weighted by its probability. It represents the long-term average outcome if the experiment or process is repeated many times.

The standard deviation, which is the square root of the variance, is also used to measure the spread or variability of the distribution. It indicates how far the values of the random variable typically deviate from the expected value.

| Outcome | Probability |
|---|---|
| 1 | 0.2 |
| 2 | 0.3 |
| 3 | 0.5 |

In the above example, we have a discrete probability distribution with three possible outcomes: 1, 2, and 3. The probability of each outcome is given in the second column. The sum of all the probabilities is 1, as required.
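To make the expected value and standard deviation concrete, here is a short sketch that computes both for the three-outcome example above. It uses the shortcut Var(X) = E[X²] − (E[X])², which gives the same result as summing the weighted squared deviations; the variable names are chosen for illustration.

```python
import math

# The PMF from the example table: outcome -> probability.
pmf = {1: 0.2, 2: 0.3, 3: 0.5}

# Expected value: each outcome weighted by its probability.
mean = sum(x * p for x, p in pmf.items())

# Variance via E[X^2] - (E[X])^2, then standard deviation as its square root.
second_moment = sum(x**2 * p for x, p in pmf.items())
variance = second_moment - mean**2
std_dev = math.sqrt(variance)

print(f"expected value = {mean:.2f}")         # 2.30
print(f"standard deviation = {std_dev:.3f}")  # 0.781
```

The expected value of 2.3 is not itself a possible outcome; it is the average that repeated trials would approach over the long run.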

Examples of Discrete Probability Distributions

A discrete probability distribution is a mathematical function that assigns probabilities to each possible outcome of a discrete random variable. Here are some examples of discrete probability distributions:

1. Bernoulli Distribution

The Bernoulli distribution is a discrete probability distribution that models a single trial with two possible outcomes: success (usually denoted as 1) or failure (usually denoted as 0). The probability of success is denoted as p, so the probability of failure is 1 − p. Examples of Bernoulli trials include a single coin flip (success = heads, failure = tails) or a single roll of a fair die (success = rolling a specific number, failure = rolling any other number).
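The Bernoulli PMF is small enough to write out in full. The sketch below assumes the die example, where success means rolling one specific number on a fair die, so p = 1/6; the function name `bernoulli_pmf` is hypothetical.

```python
from fractions import Fraction

def bernoulli_pmf(x, p):
    """P(X = x) for a single trial: p for success (1), 1 - p for failure (0)."""
    if x == 1:
        return p
    if x == 0:
        return 1 - p
    return 0  # no other outcome is possible

# Assumed example: success = rolling one specific number on a fair die.
p = Fraction(1, 6)
mean = sum(x * bernoulli_pmf(x, p) for x in (0, 1))
variance = sum((x - mean) ** 2 * bernoulli_pmf(x, p) for x in (0, 1))

print(mean, variance)  # 1/6 5/36
```

The results match the general Bernoulli formulas: the mean is p and the variance is p(1 − p).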

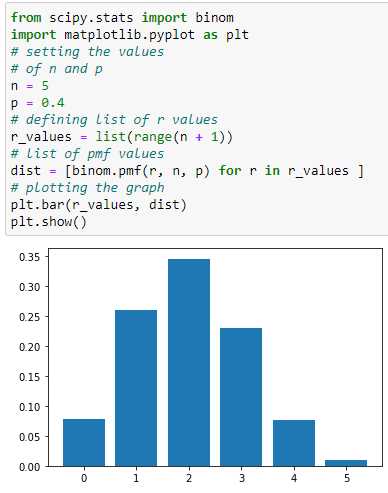

2. Binomial Distribution

The binomial distribution is a discrete probability distribution that models the number of successes in a fixed number of independent Bernoulli trials, each with the same probability of success. It is characterized by two parameters: the number of trials, denoted as n, and the probability of success in each trial, denoted as p. The binomial distribution gives the probability of obtaining a specific number of successes in those n trials. Examples of binomial distributions include the number of heads obtained from flipping a coin multiple times or the number of defective items in a sample.
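The binomial probability of exactly k successes in n trials is C(n, k) · p^k · (1 − p)^(n − k). A minimal sketch, assuming 10 flips of a fair coin as the example and using a hypothetical function name `binomial_pmf`:

```python
from math import comb

def binomial_pmf(k, n, p):
    """P(exactly k successes in n independent trials with success probability p)."""
    if k < 0 or k > n:
        return 0.0
    return comb(n, k) * p**k * (1 - p) ** (n - k)

# Assumed example: exactly 5 heads in 10 flips of a fair coin.
print(binomial_pmf(5, 10, 0.5))  # 0.24609375

# The probabilities over k = 0..n add up to 1.
total = sum(binomial_pmf(k, 10, 0.5) for k in range(11))
```

Even the most likely outcome, 5 heads out of 10, occurs less than a quarter of the time.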

3. Poisson Distribution

The Poisson distribution is a discrete probability distribution that models the number of events occurring in a fixed interval of time or space, assuming the events occur independently and at a constant average rate. It is characterized by a single parameter, denoted as λ (lambda), which represents the average number of events in the interval. The Poisson distribution gives the probability of observing a specific number of events in the given interval. Examples of Poisson distributions include the number of phone calls received in an hour or the number of accidents occurring in a day.
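The Poisson probability of observing exactly k events is λ^k · e^(−λ) / k!. The sketch below assumes an average of 3 phone calls per hour, a value picked only for illustration, and uses a hypothetical function name `poisson_pmf`.

```python
import math

def poisson_pmf(k, lam):
    """P(exactly k events) when the average number of events per interval is lam."""
    if k < 0:
        return 0.0
    return lam**k * math.exp(-lam) / math.factorial(k)

lam = 3.0  # assumed average of 3 calls per hour

for k in range(6):
    print(f"P({k} calls) = {poisson_pmf(k, lam):.4f}")

# Probability of at most 5 calls in the hour.
p_at_most_5 = sum(poisson_pmf(k, lam) for k in range(6))
```

Unlike the binomial distribution, the Poisson distribution has no upper limit on k, although the probabilities shrink quickly once k is well above λ.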

4. Geometric Distribution

The geometric distribution is a discrete probability distribution that models the number of trials needed to achieve the first success in a series of independent Bernoulli trials. It is characterized by a single parameter, denoted as p, which represents the probability of success in each trial. The geometric distribution calculates the probability of achieving the first success on a specific trial. Examples of geometric distributions include the number of times a coin must be flipped to obtain the first heads or the number of attempts needed to win a game.
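For the geometric distribution, the probability that the first success arrives on trial k is (1 − p)^(k − 1) · p: there must be k − 1 failures followed by one success. A minimal sketch, assuming a fair coin (p = 0.5) and a hypothetical function name `geometric_pmf`:

```python
def geometric_pmf(k, p):
    """P(first success occurs on trial k), for k = 1, 2, 3, ..."""
    if k < 1:
        return 0.0
    return (1 - p) ** (k - 1) * p

# Assumed example: first heads appears on the third flip of a fair coin.
print(geometric_pmf(3, 0.5))  # 0.125

# The mean number of trials is 1 / p; a long partial sum approximates it.
approx_mean = sum(k * geometric_pmf(k, 0.5) for k in range(1, 200))
```

For a fair coin the mean works out to about 2 flips, in line with the general result 1/p.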

Emily Bibb simplifies finance through bestselling books and articles, bridging complex concepts for everyday understanding. Engaging audiences via social media, she shares insights for financial success. Active in seminars and philanthropy, Bibb aims to create a more financially informed society, driven by her passion for empowering others.